From “How to Make a Bot That Isn’t Racist,” by Sarah Jeong in Motherboard (3/25/16):

“Most of my bots don’t interact with humans directly,” said Kazemi. “I actually take great care to make my bots seem as inhuman and alien as possible. If a very simple bot that doesn’t seem very human says something really bad — I still take responsibility for that — but it doesn’t hurt as much to the person on the receiving end as it would if it were a humanoid robot of some kind.”

So what does it mean to build bots ethically?

The basic takeaway is that botmakers should be thinking through the full range of possible outputs, and all the ways others can misuse their creations.

“You really have to sit down and think through the consequences,” said Kazemi. “It should go to the core of your design.”

For something like TayandYou, said Kazemi, the creators should have “just run a million iterations of it one day and read as many of them as you can. Just skim and find the stuff that you don’t like and go back and try and design it out of it.”

“It boils down to respecting that you’re in a social space, that you’re in a commons,” said Dubbin. “People talk and relate to each other and are humans to each other on Twitter so it’s worth respecting that space and not trampling all over it to spray your art on people.”

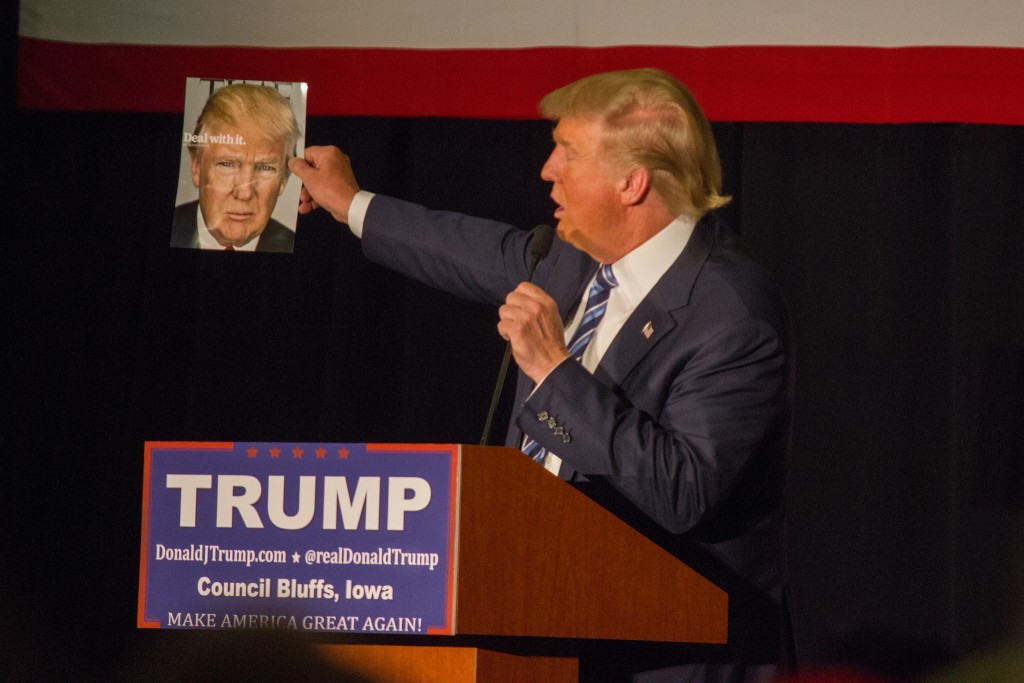

For thricedotted, TayandYou failed from the start. “You absolutely do NOT let an algorithm mindlessly devour a whole bunch of data that you haven’t vetted even a little bit,” they said. “It blows my mind, because surely they’ve been working on this for a while, surely they’ve been working with Twitter data, surely they knew this shit existed. And yet they put in absolutely no safeguards against it?!”

According to Dubbin, TayandYou’s racist devolution felt like “an unethical event” to the botmaking community. After all, it’s not like the makers are in this just to make bots that clear the incredibly low threshold of being not-racist.