- rc3.org: How programming is like golf: “No matter how easy it is to get close to the hole, you have to make those seemingly easy putts in order to finish, and the small bits at the end can wind up costing you just as much as the big chunks of progress did early in the project.” Or: First you do the first 80 percent, then you do the second 80 percent…

- Papers’ Web Hopes Dim a Bit – WSJ.com: “Roughly 70% to 80% of [newspapers’] online revenue is tied to a classified ad sold in the print edition.” If true, yikes: classified is going online anyway…

Archives for April 2007

David Halberstam, RIP

The journalist, who died in a car crash near the Dumbarton Bridge here in the Bay Area, was 73. (SF Chron; Mercury News.)

I first read his 1972 masterpiece, The Best and the Brightest, as a curious teenager trying to figure out how and why our country was stuck fighting a war that could not be won on behalf of people who plainly did not want us to do so. It’s fair to say that the book shaped my view of U.S. foreign policy, and of the need to curb our government’s predilection for fighting unnecessary wars. Halberstam’s chronicle of the arrogance of power illustrated how the confidence of Kennedy’s Harvard-trained managers meshed with the cupidity of the Cold War military-industrial complex to produce the Vietnam quagmire. The title, in other words, was ironic.

In some of his later works Halberstam allowed his reputation as a Pulitzer-garlanded star to inflate his style. But The Best and the Brightest was taut and tragic. Today it reminds us that the “Vietnam complex” was not some debilitating national illness that needed to be shucked off; it represented hard-won experience of the limits of imperial power, gained through an ill-begotten war. How shameful that those lessons vanished from Washington so soon, and that another generation of Americans must once more seek the answers I found in Halberstam’s book.

UPDATE: This from Clyde Haberman’s Times obit:

William Prochnau, who wrote a book on the reporting of that period, “Once Upon a Distant War,” said last night that Mr. Halberstam and other American journalists then in Vietnam were incorrectly regarded by many as antiwar.

“He was not antiwar,” Mr. Prochnau said. “They were cold war children, just like me, brought up on hiding under the desk.” It was simply a case, he said, of American commanders lying to the press about what was happening in Vietnam. “They were shut out and they were lied to,” Mr. Prochnau said. And Mr. Halberstam “didn’t say, ‘You’re not telling me the truth.’ He said, ‘You’re lying.’ He didn’t mince words.”

[tags]David Halberstam, journalism[/tags]

Apple and Brooks’s Law

Apple recently announced that it had to delay the release of the next version of Mac OS X, Leopard, by a few months — too many developers had to be tossed into the effort to get the new iPhone out the door by its June release. Commenting on the delay, Paul Kedrosky wrote, “Guess what? People apparently just rediscovered that writing software is hard.”

In researching Dreaming in Code, I spent years compiling examples of people making that rediscovery. I’m less obsessive about it these days, but stories like this one still cause a little alarm to ring in my brain. They tend to come in clumps: Recently, there was the Blackberry blackout, caused by a buggy software upgrade; or the Mars Global Surveyor, given up for lost in January, which, the LA Times recently reported, was doomed by a cascading failure started by a single bad command.

Kedrosky suggests the possibility of a Brooks’s Law-style problem on Apple’s hands, if the company has tried to speed up a late iPhone software schedule by redeploying legions of OS X developers onto the project. If that’s the case, then we’d likely see even further slippage from the iPhone project, which would then cause further delays for Leopard.

This is the sort of thing that always seemed to happen at Apple in the early and mid-’90s, and has rarely happened in Steve Jobs Era II. I write “rarely,” not “never,” because I recall this saga of “a Mythical Man Month disaster” on the Aperture team. If the tale is accurate, Apple threw 130 developers at a till-then-20-person team, with predictably painful results. We’ll maintain a Brooks’s Law Watch on Apple as the news continues to unfold.
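Part of Brooks’s own explanation for his law, in The Mythical Man-Month, is that coordination cost grows with the number of pairs of people who must talk to one another, n(n − 1)/2, not with headcount. The sketch below applies that simple pairwise model to the Aperture numbers from the anecdote above (20 people, plus 130 more). It is only an illustration: the helper name `communication_paths` is hypothetical, and the model ignores team structure, ramp-up time and everything else that makes real projects messier.

```python
def communication_paths(team_size: int) -> int:
    """Distinct pairs in a team of n people: n * (n - 1) / 2 (Brooks's pairwise model)."""
    if team_size < 0:
        raise ValueError("team_size must be non-negative")
    return team_size * (team_size - 1) // 2


# Figures from the (unconfirmed) Aperture tale: a 20-person team plus 130 newcomers.
original_team = 20
expanded_team = original_team + 130

before = communication_paths(original_team)  # 20 * 19 / 2 pairs
after = communication_paths(expanded_team)   # 150 * 149 / 2 pairs

print(f"Headcount grows {expanded_team / original_team:.1f}x")
print(f"Pairwise channels grow from {before} to {after} ({after / before:.1f}x)")
```

Headcount rises 7.5-fold, but under this model the number of possible conversations rises from 190 to 11,175, nearly 60-fold, which is the intuition behind “adding manpower to a late software project makes it later.”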

UPDATE: Welcome, Daring Fireball and Reddit readers! And to respond to one consistent criticism: Sure, iPhone isn’t late yet, but Apple is explicitly saying it needed to add more developers to the project to meet its original deadline. If that all works out dandy, then the Brooks’s Law alarm will turn out to have been unwarranted. Most likely, given Apple’s discipline, the company will ship iPhone, with its software, when it says it will. What we won’t and can’t know is whether, and if so how much, the shipping product has been scaled back. And sure, of course this is all conjecture. Conjecture is what we have, given Apple’s locked-down secrecy.

[tags]apple, leopard, os x, software delays, brooks’s law[/tags]

Links for April 20th

- Wii and DS Turn Also-Ran Nintendo Into Winner in Videogames Business – WSJ.com: Guess I was wrong about the Wii, which I derided for its absurd name!

- BBC NEWS | Business | Blackberry reveals failure cause: Surprise! It was a failed, or “insufficiently tested,” software upgrade that brought down the Blackberries.

- Your Daily Awesome: Ira Glass on Storytelling: Four videos in which the This American Life host talks about his craft.

Michael Wesch’s “Machine” video

Before the opening talk at the Web 2.0 Expo earlier this week, the conference organizers played Michael Wesch’s video-ode to the participatory Web, “The Machine is Us/ing Us”. Given the insider-y nature of the crowd, I have to assume that most of the attendees had already seen it — it had rocketed to blogospheric celebrity in early February. But I didn’t realize the guy who made the video, a professor of cultural anthropology from Kansas State University, was at the conference.

On Tuesday afternoon I literally stumbled upon his talk in the hallway (on a tip from my neighbor Tim Bishop); it was a part of the free, informal “Web2Open” parallel conference. Across the hall, a hubbub made it hard to hear Wesch — the Justin.tv people had set up camp there and needed to be asked to pipe down.

Wesch turns out to be a rare combination of ingenuous Web enthusiast and smart cultural critic. In my experience, the cultural critics are usually pickled in cynicism and the Web enthusiasts are often blinded to their technology’s drawbacks. Maybe the discipline of cultural anthropology has helped Wesch maintain some balance; or maybe his sheer distance from Silicon Valley-mania — whether in the flatlands of Kansas or the mountains of Papua New Guinea — has helped him find a fresh perspective.

The came-out-of-nowhere saga of Wesch’s video neatly mirrors its message about the generated-from-the-bottom-up nature of the Web. Wesch originally made the video, he explained, because he was writing a paper about Web 2.0 for anthropologists, trying to explain how new Web tools can transform the academic conversation. He created it “on the fly” using low-end tools. Its grammar, with its write-then-delete-and-rewrite rhythms, emerged as he made goofs and fixed them: “The mistakes were real, at first. Then I thought they were cool, and started to plan them.” The music was a track by a musician from the Ivory Coast that he found via Creative Commons. (Once the video became a hit, Wesch says, he got a moving e-mail from the musician, who said that he’d been about to give up his dreams of a life in music, but was now finding new opportunities thanks to the attention the video was sending his way.)

The video’s viral success took Wesch by surprise. He’d forwarded it to some colleagues in the IT department to make sure that he hadn’t erred in his definition of XML. They sent it around. It took a week to go ballistic.

At one point someone in the small audience asked Wesch a question about his field research in Papua New Guinea. He paused for a second, asking, “There’s about a two-hour lecture there, I’m not sure I can compress that into a five-minute answer — should I try?” I couldn’t help myself; I blurted, “Hey, you did the entire history of the Web in four minutes — go ahead!”

[tags]web 2.0, web 2.0 expo, michael wesch, the machine is us/ing us, viral video[/tags]

Why Gonzales may stick around

After his feeble attempt to defend his record of deception and cronyism before the Senate Judiciary Committee yesterday, Attorney General Alberto Gonzales must surely be polishing up his resume. Right? Well, under normal circumstances, that would be inarguable. But these aren’t normal circumstances at all.

Unless Congress impeaches and convicts Gonzales, the only person who can remove him from office is the man who selected him. So far, President Bush has remained steadfast behind his old friend. That’s no coincidence. The Justice Department is the tip of the iceberg; there are heaps more corruption and lies waiting to be aired throughout this administration, and now that the Democrats are running Congress, some of that information is beginning to come out.

Having a loyal “Bushie” fixer like Gonzales in the A.G. chair is the Bush crew’s chief defensive bulwark. Replacing him with a similar toady would, today, be impossible, since any successor would have to be confirmed by a Senate the Democrats now control.

Under normal circumstances, given the revolt against Gonzales not only among Democrats but in his own party, a president would eventually bow to the inevitable, understanding that otherwise he’d be unable to get anything else done for the remainder of his administration.

Here’s the catch: The Bush administration isn’t trying to get anything done any more. All these guys want to do is hang on for the next 18 months without ending up in jail or accepting the inevitability of defeat in Iraq. That’s it. How does firing Gonzales help them achieve those goals? While the Senate smolders over the arrogance and incompetence of Bush’s attorney general, the clock keeps ticking. The longer the senators deliberate over Gonzales, the less time they have to investigate everybody else.

I won’t be surprised if Gonzales survives a lot longer than pundits are presently predicting. Bush’s stubbornness isn’t just a character flaw; it’s a desperate by-the-fingernails defensive tactic.

Rick Kleffel interview, podcast

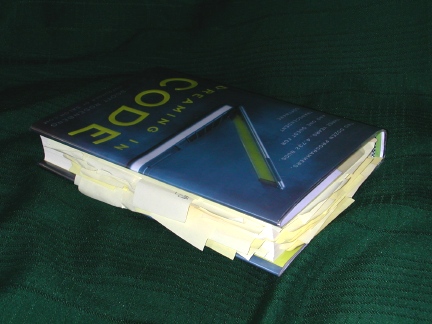

Rick Kleffel has a review site called the Agony Column, does a radio show for KUSP, and also podcasts, and sometimes contributes to NPR. So he keeps busy. I met him recently at KQED for an interview about Dreaming in Code, and he showed up with a copy of the book that bristled with more post-it-note bookmarks than I’d have thought physically possible:

It’s great that Kleffel liked my book enough to pay it this kind of close attention. He also recognized my effort to find, in the saga of a software project, some broader themes: for instance, how strange and difficult it is for groups of people to work together creating anything that’s abstract. He wrote: “The book, though it stays focused on software engineering, is clearly applicable to every other realm of life.”

Here’s Kleffel’s review; here’s the audio of our interview. A version of it is scheduled for broadcast on KUSP on Friday at around 10 AM, if you’re in the general Santa Cruz vicinity.

Schmidt on scaling Google

The first time I heard Eric Schmidt speak was in June 1995. I’d flown to Honolulu to cover the annual INET conference for the newspaper I then worked for. The Internet Society’s conclave was a sort of victory lap for the wizards and graybeards who’d designed the open network decades before and were finally witnessing its come-from-behind triumph over the proprietary online services. It was plain, at that point in time, that the Internet was going to be the foundation of future digital communications.

But it wasn’t necessarily clear how big it was going to get. In fact, at that event Schmidt predicted that the Internet would grow to 187 million hosts within 5 years. If I understand this chart at Netcraft properly, we actually reached that number only recently. (Netcraft tracks web hosts, so maybe I’m comparing apples and oranges.)

I thought of this today at the Web 2.0 Expo, where Eric Schmidt, now Google’s CEO, talked on stage with John Battelle. (Dan Farber has a good summary.) He discussed Google’s new lightweight Web-based presentation app (the PowerPoint entry in Google’s app suite); the recent deal to acquire DoubleClick, and Microsoft’s hilarious antitrust gripe about it; and Google’s commitment to letting its users pack up their data and take it elsewhere (a commitment that remains theoretical: not a simple thing to deliver, but if anyone has the brainpower resources to make it happen, Google does).

But what struck me was a more philosophical point near the end. Battelle asked Schmidt what he thinks about when he first wakes up in the morning (I suppose this is a variant of the old “what keeps you up at night”). After joshing about doing his e-mail, Schmidt launched into a discourse on what he worries about these days: “scaling.”

It surprised me to hear this, since Google has been so successful at keeping up with the demands on its infrastructure — successful at building it smartly, and at funding it, too. Schmidt was also, of course, talking about “scaling” the company itself.

“When the Internet really took off in the mid 90s, a lot of people talked about the scale, of how big it would be,” Schmidt said. It was obvious at the time there’d be a handful of defining Net companies, and each would need a “scaling strategy.”

Mostly, though, he was remarking on “how early we are in the scaling of the Internet” itself: “We’re just at the beginning of getting all the information that has been kept in small networks and groups onto these platforms.”

Tim O’Reilly made a similar point at the conference kick-off: In the era of Web-based computing, he said, we’re still at the VisiCalc stage.

Google famously defines its mission as “to organize the world’s information and make it universally accessible and useful.” But the work of getting the universe of individual and small-group knowledge onto the Net is something Google can only aid. Ultimately, this work belongs to the millions of bloggers and photographers and YouTubers and users of services yet to be imagined who provide the grist for Google’s algorithmic mills.

I find it bracing and helpful to recall all this at a show like the Web 2.0 Expo — which, while rewarding in many ways, gives off a lot of mid-to-late dotcom-bubble fumes. Froth will come and go. The vast project of building, and scaling, a global information network to absorb everything we can throw into it — that remains essential. And for all the impressive dimensions of Google, and the oodles of Wikipedia pages, and the zillions of blogs, we’ve only just begun to post.

[tags]google, eric schmidt, internet growth, web 2.0, web 2.0 expo[/tags]

Toward a Mac migration

There are still three barriers standing between me and moving onto a Mac. Two are rapidly disappearing. (I was a Mac guy for years and shifted to a PC in the mid-’90s during Apple’s slump years, when the unreliability of the Mac OS and Mac hardware had me losing more data than I could stand.)

One is the availability of a truly lightweight Apple laptop. Rumor has it that’s coming; it’s time for a Mac laptop that is slim, elegant and weighs three pounds, like the IBM/Lenovo X-class laptops I’ve been using forever. I’m sure Apple knows this, and I can’t imagine we’ll have to wait much longer for such a device.

Second is the availability of a Quicken for the Mac that’s as good as Quicken for the PC. It seems plain that Intuit is never going to make this happen.

Third is that, for the moment at least, I’m still running my life and work with Ecco Pro, and it’s an old Windows app. There are modern Mac apps that do some of what Ecco does better than it does, but I’ve found none that does everything that Ecco does as well as it does, and it pains me to think of abandoning it.

In the age of Intel-based Macs it’s now quite easy to run Windows alongside OS X. Apple’s Boot Camp, though, requires a reboot each time you want to switch to your Windows app, and that’s a royal pain; Parallels doesn’t. But both approaches require that you spend $300 on another copy of Windows, and that’s an extraordinary amount to pay.

Last night I downloaded and tried out Crossover Mac, an application (based on the WINE project) that lets you run individual Windows apps from inside OS X (on an Intel-based Mac) without needing to install a second OS. The good news is that Crossover Mac apparently ran Quicken 2005 without a hitch; Quicken is one of a bevy of apps that Crossover officially supports. (I haven’t really pounded on it, and maybe heavy usage will uncover problems, but I’m impressed so far.)

So what I’m now wrestling with is: how to get Ecco Pro running under Crossover? The app is not officially supported (no surprise there!) and my “let’s give it a try anyway” install failed. Ecco is a solid Win32 application, but it dates back to the mid-’90s, so there might simply be too many archaic calls or idiosyncrasies. I’d probably give up hope, but there are screenshots on the Crossover site of Ecco running successfully under Crossover/Linux. So I think there ought to be some hope here. I’m posting this largely as a beacon: Ecco Pro users! Crossover users! Can anything be done here?

I’m also pondering trying the Parallels route by using a Windows license from an older, disused version of XP or Windows 2000; either of those runs Ecco perfectly. If I experiment with Parallels using this approach I’ll report on it.

[tags]ecco pro, crossover, parallels, windows on mac[/tags]

Kathy Sierra and the werewolves

I attended the O’Reilly Emerging Technology Conference, or ETech, once again this year, and, distracted by other projects, did a very poor job of blogging about it. (You can read about the excellent EFF-sponsored debate between Mark Cuban and Fred von Lohmann, on the YouTube/Viacom lawsuit, here and here; Raph Koster spoke about magic as the underlying structure of game-play; and Danah Boyd gave a wonderful talk titled “Incantations for Muggles,” about the relationship between technologist-wizards and the rest of the human race — Koster took notes on it.)

The conference, as you may have heard, was abuzz with discussion of the Kathy Sierra saga — she’d been booked as a kickoff keynote speaker, but cancelled at the last minute, understandably spooked by threatening comments posted on her site and a couple of other blogs.

Sierra’s plight set off an immediate and vast blogstorm. There was much introspection and self-questioning about the onslaught of invective, nastiness, vicious taunts and obscene threats that sometimes emerges online, and seems especially targeted at women; there was also something of a rush to point fingers at particular bloggers whose sites and posts might (or might not) have encouraged the comments that caused Sierra such grief.

A prodigious number of people seemed to feel they had to weigh in immediately on this ugly situation, though virtually no one (yes, including myself) seemed willing or able to take the time needed to explore, in detail, what had actually happened and who had done what. I still haven’t seen any fully reported-out piece on the events — the coverage in the S.F. Chronicle seemed creditable, but it didn’t unravel the toughest questions: who was stalking Sierra, and was there in fact any relationship at all between said stalker(s) and the well-known bloggers she called out in her wounded post?

Sitting in a conference without the time or resources to do any reporting of my own, I thought, shoot, there’s no way I can know enough about what happened to add anything to the conversation. Of course comments like those Sierra encountered are, and should be considered, beyond the pale; Sierra deserved sympathy and support. But the storm of anger and the rush to judgment her post sparked represented, I thought, a failure of forethought. Running a blog provides the constant temptation to shoot your mouth off. Sometimes, though, when you just don’t know all the facts, considered silence is golden.

The irony here is that this was supposed to be ETech’s year of fun and games.
